Artificial intelligence has gone from a research curiosity to a foundational layer of modern business. But as the industry explodes, so does its vocabulary. Terms like AI data center, AI colocation, and AI edge are thrown around constantly—often as marketing shorthand rather than meaningful distinctions.

For founders, CTOs, and even seasoned infrastructure leaders, this creates a dangerous gap: decisions are being made based on jargon instead of understanding.

This article breaks through that noise. We’ll unpack what these terms actually mean, how they relate to each other, and—most importantly—how companies like Metanet Hosting can help early-stage and scaling AI companies turn infrastructure from a cost center into a strategic advantage.

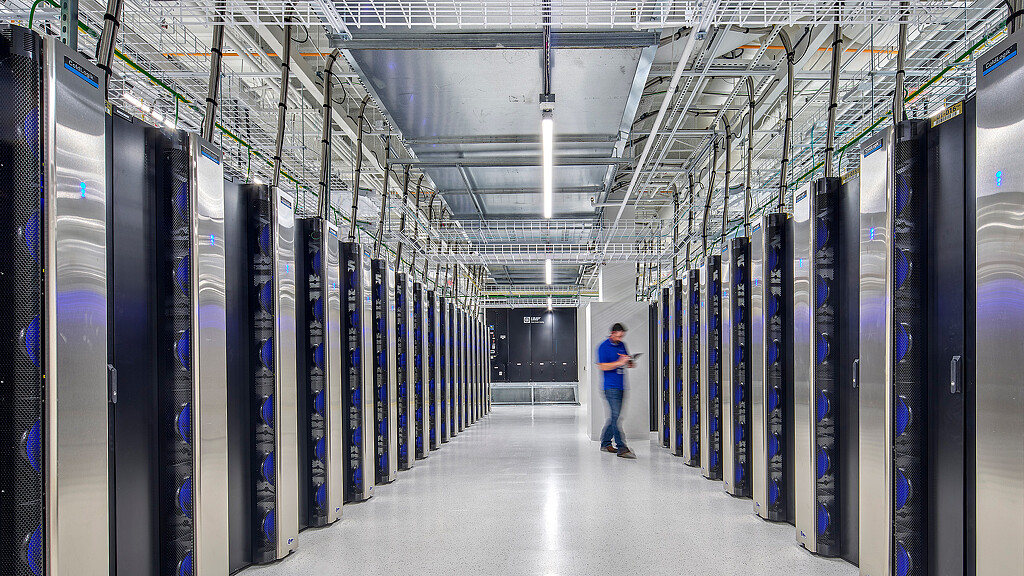

Part 1: The Foundation: What Is an AI Data Center Really?

At its simplest, an AI data center is just a place where AI workloads run. But that definition misses the real story.

The textbook definition

An AI data center is a facility designed to train, deploy, and run artificial intelligence applications, equipped with high-performance compute, storage, and networking infrastructure. (ibm.com)

These facilities are optimized for:

- GPU/TPU-based computation

- Massive data throughput

- Advanced cooling and power density

The reality behind the term

In industry conversations, “AI data center” often implies something more:

It’s not just a building; it’s a capability layer for producing intelligence.

Think of it like this:

| Traditional Data Center | AI Data Center |
|---|---|
| Stores and serves data | Learns from data |
| CPU-heavy workloads | GPU/accelerator-heavy |
| Predictable usage | Explosive, variable workloads |
| Static applications | Constant model iteration |

AI data centers exist because modern AI, especially large models, requires:

- Extreme compute density (often 5–10x that of traditional racks) (Wikipedia)

- High-speed interconnects between thousands of chips

- Sophisticated cooling systems just to keep hardware operational

What people don’t say

Here’s the truth rarely stated in marketing:

Most companies should not build their own AI data center.

Why?

- Capital costs are enormous

- Power and cooling are major constraints

- Expertise is rare

- Utilization is often inefficient

This leads directly to the next concept: AI colocation.

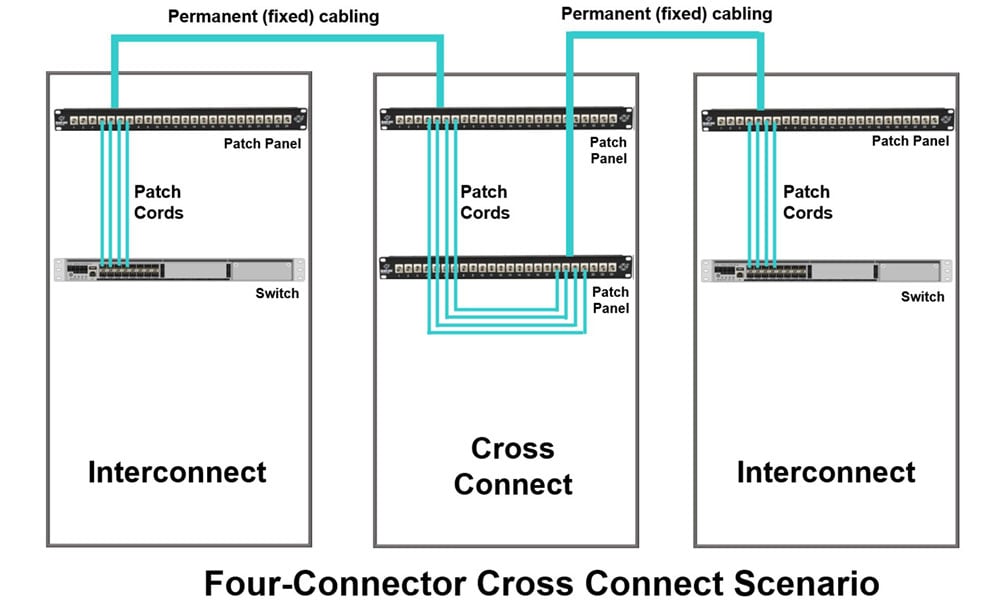

Part 2: AI Colocation — The Most Misunderstood Term in AI Infrastructure

The textbook definition

Colocation means renting space, power, cooling, and connectivity in a shared data center facility instead of building your own.

For AI specifically:

- Companies bring their own GPU servers

- The facility provides infrastructure (power, cooling, bandwidth)

The real meaning in AI

AI colocation is not just “renting space.”

It is outsourcing the hardest parts of AI infrastructure while retaining control of your compute.

This is crucial.

Unlike cloud:

- You own the hardware

- You control performance and cost over time

- You avoid unpredictable usage pricing

Unlike building your own data center:

- You avoid massive upfront capital

- You gain immediate access to enterprise-grade infrastructure

Why AI colocation is exploding

Modern AI workloads require:

- High power density (40–100 kW per rack) (Wikipedia)

- Advanced cooling systems

- Reliable network interconnectivity

Few companies can support this internally.

So instead, they colocate.
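
To make the 40–100 kW per rack range above concrete, here is a minimal back-of-the-envelope sketch in Python. The server count, GPU count, per-GPU wattage, and per-server overhead are illustrative assumptions, not figures from any vendor or from the sources cited in this article; only the 40–100 kW range comes from the text.

```python
# Rough rack power estimate. All hardware figures below are hypothetical
# placeholders chosen for illustration, not vendor specifications.

def rack_power_kw(servers_per_rack: int, gpus_per_server: int,
                  gpu_watts: float, overhead_watts_per_server: float) -> float:
    """Return the estimated IT load of one rack in kilowatts."""
    watts_per_server = gpus_per_server * gpu_watts + overhead_watts_per_server
    return servers_per_rack * watts_per_server / 1000.0


def in_density_range(kw: float, low_kw: float = 40.0, high_kw: float = 100.0) -> bool:
    """Check whether a rack falls inside the 40-100 kW range cited in the text."""
    return low_kw <= kw <= high_kw


# Assumed example: 6 servers per rack, 8 GPUs each at 700 W, plus
# 2,000 W per server for CPUs, memory, fans, and networking.
ai_rack_kw = rack_power_kw(6, 8, 700.0, 2000.0)
print(f"Estimated rack load: {ai_rack_kw:.1f} kW")
print("Within 40-100 kW range:", in_density_range(ai_rack_kw))
```

Under these assumed inputs, a single rack already sits inside the cited range, which is the kind of load a typical office server room is not provisioned to power or cool. Swapping in your own hardware numbers is the point of the exercise.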

The hidden truth

AI colocation is often marketed as “just hosting.”

But in reality:

It’s a strategic decision about control vs. convenience.

Companies choosing colocation are often:

- Scaling beyond cloud cost efficiency

- Running persistent workloads (training or inference)

- Building proprietary AI infrastructure

And importantly…

They are future-proofing their compute stack.

Part 3: AI Edge — Where the Real World Meets Intelligence

The textbook definition

Edge computing processes data closer to where it is generated, rather than sending everything to centralized data centers. (Metanet Hosting)

The real meaning

AI edge is not about infrastructure—it’s about time.

Specifically: how fast decisions need to happen.

Examples:

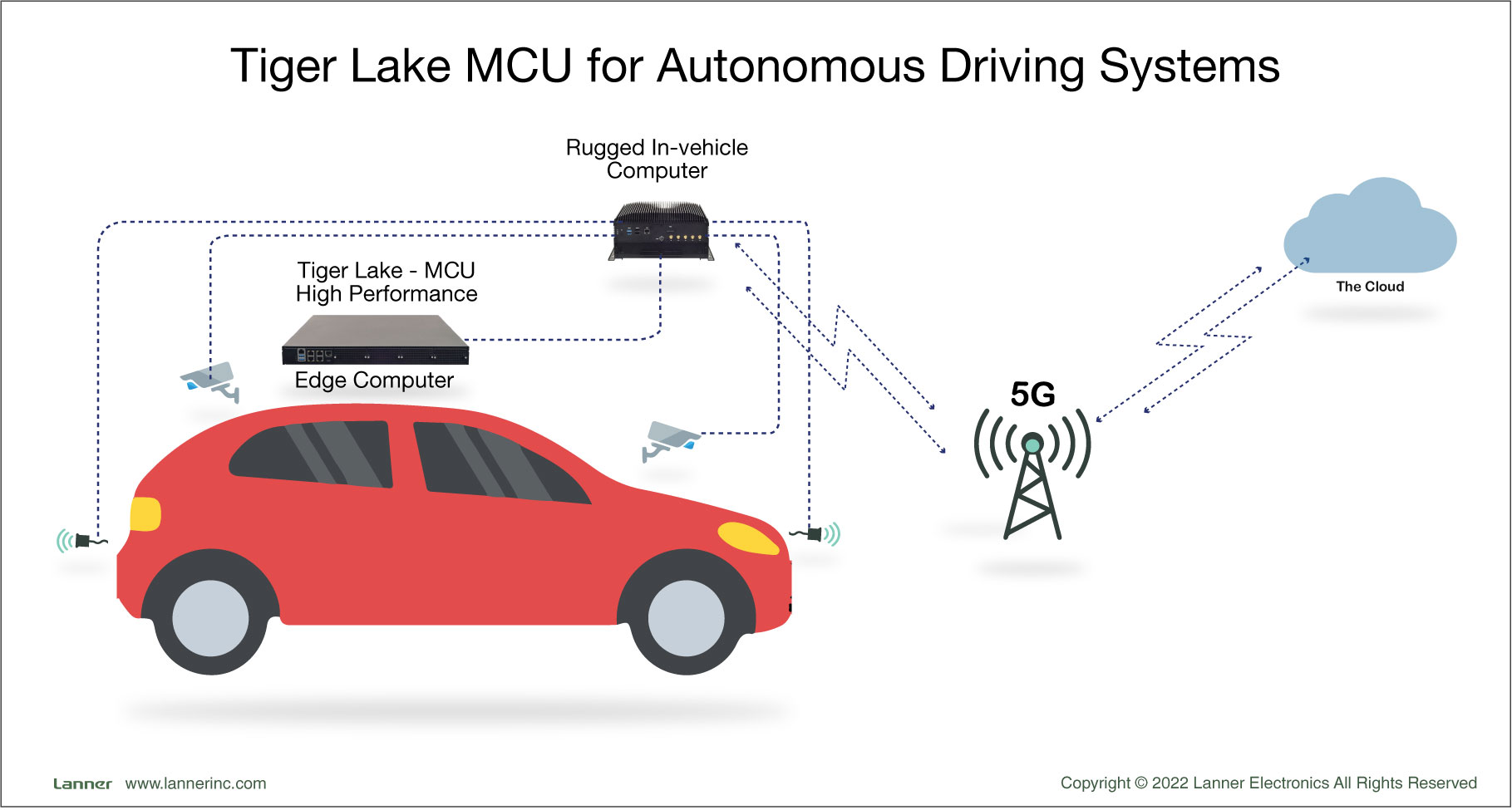

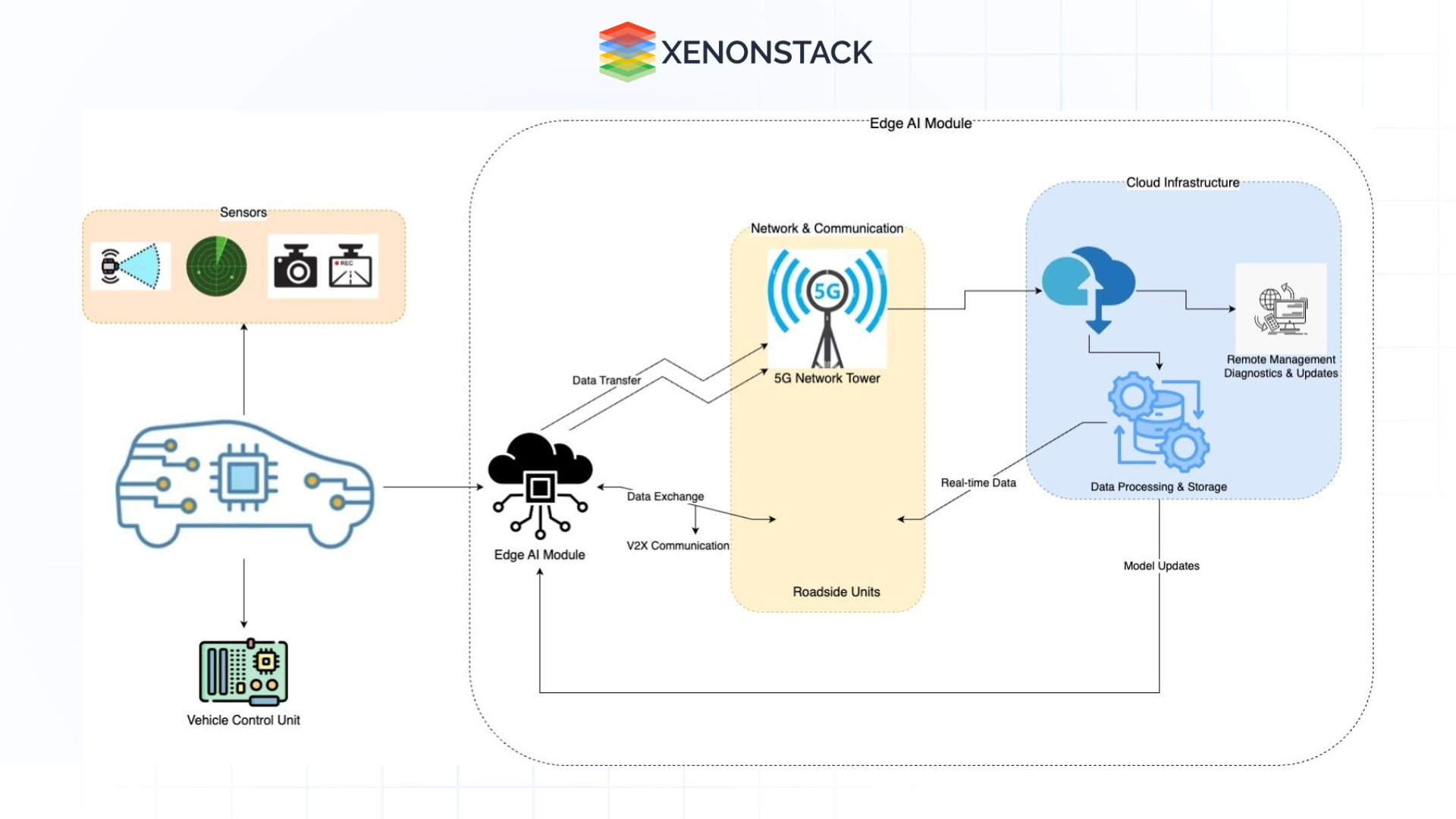

- Autonomous vehicles

- Industrial robotics

- Real-time fraud detection

- Healthcare monitoring

In these cases, sending data to a distant data center introduces unacceptable delay.

Even 50–100 milliseconds can be too slow.
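
A rough latency budget shows why distance matters. The sketch below assumes light travels through optical fiber at roughly 200 km per millisecond (about two-thirds of its speed in a vacuum); the distances, processing time, network overhead, and the 50 ms budget are hypothetical values picked for illustration, not measurements.

```python
# Approximate round-trip latency for a request sent to a remote site.
# FIBER_KM_PER_MS is an approximation; every other number is a
# hypothetical example input.

FIBER_KM_PER_MS = 200.0  # light in fiber covers roughly 200 km per millisecond


def round_trip_ms(distance_km: float, processing_ms: float,
                  network_overhead_ms: float = 0.0) -> float:
    """Propagation there and back, plus inference time and routing overhead."""
    propagation_ms = 2 * distance_km / FIBER_KM_PER_MS
    return propagation_ms + processing_ms + network_overhead_ms


def meets_budget(latency_ms: float, budget_ms: float) -> bool:
    """True when the total latency fits inside the application's budget."""
    return latency_ms <= budget_ms


BUDGET_MS = 50.0  # assumed application deadline

# Assumed scenario 1: centralized data center 2,000 km away.
central_ms = round_trip_ms(distance_km=2000, processing_ms=30, network_overhead_ms=15)

# Assumed scenario 2: edge site 10 km away running the same model.
edge_ms = round_trip_ms(distance_km=10, processing_ms=30, network_overhead_ms=1)

print(f"Centralized: {central_ms:.1f} ms, within budget: {meets_budget(central_ms, BUDGET_MS)}")
print(f"Edge:        {edge_ms:.1f} ms, within budget: {meets_budget(edge_ms, BUDGET_MS)}")
```

With identical inference time in both scenarios, the only difference is how far the request travels and how many network hops it crosses. In this assumed case, that difference alone decides whether the deadline is met.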

The deeper insight

AI edge represents a fundamental shift:

| Centralized AI | Edge AI |
|---|---|
| Train & infer in one place | Train centrally, infer locally |
| Latency tolerant | Latency critical |
| Bandwidth heavy | Bandwidth optimized |
| Cloud-first | Hybrid/distributed |

In practice:

- Models are trained in AI data centers or colocation facilities

- Then deployed at the edge for real-time inference (RCR Wireless News)

What people get wrong

Edge is often marketed as a replacement for data centers.

It’s not.

Edge and centralized infrastructure are complementary, not competitive.

The future is hybrid:

- Train centrally

- Deploy globally

- Infer locally

Part 4: How These Three Concepts Actually Fit Together

Let’s cut through the confusion:

The AI infrastructure stack (real-world view)

- AI Data Centers
  - Where models are trained
  - Heavy compute, centralized
- AI Colocation
  - How most companies access that power
  - Shared infrastructure, owned hardware
- AI Edge
  - Where models are used
  - Distributed, low-latency execution

A simple analogy

Think of AI like the movie industry:

- AI Data Center → Film studio (where movies are made)

- AI Colocation → Renting studio space instead of building your own

- AI Edge → Movie theaters worldwide (where content is consumed)

Part 5: The Industry Jargon Problem

The biggest issue today isn’t technology—it’s language.

What vendors say vs what they mean

| Term | What it sounds like | What it actually means |
|---|---|---|
| “AI-ready data center” | Cutting-edge AI facility | Has GPUs and power capacity |
| “Edge AI platform” | Full AI system | Often just deployment layer |
| “Colocation solution” | Simple hosting | Complex infrastructure partnership |

This confusion leads to:

- Poor architecture decisions

- Overspending on cloud

- Underutilized hardware

- Misaligned scaling strategies

Part 6: Where Metanet Hosting Fits In (And Why It Matters)

Now let’s get practical.

For early-stage and scaling AI companies, the biggest challenge is not models—it’s infrastructure decisions.

This is where Metanet Hosting becomes interesting—not just as a provider, but as a strategic layer.

Based on their positioning around interconnectivity and infrastructure, here are five ways they can act as a force multiplier for AI companies:

1. Lowering the Barrier to Entry for AI Infrastructure

Most startups cannot:

- Build data centers

- Secure high-density power

- Design cooling systems

Metanet enables:

Access to enterprise-grade infrastructure without an enterprise cost structure

This aligns with the broader industry trend where colocation reduces the need to build facilities from scratch.

2. Acting as a Neutral Advisor (Not Just a Vendor)

Many infrastructure providers push:

- Their cloud

- Their stack

- Their ecosystem

But emerging AI companies need:

- Guidance on architecture choices

- When to use cloud vs colocation vs edge

- Cost-performance tradeoffs

A company like Metanet can act as:

A free strategic advisor layer, helping founders avoid costly mistakes

3. Enabling Hybrid AI Architectures

Modern AI is not single-location.

It’s:

- Centralized training

- Distributed inference

- Multi-region deployment

Metanet’s focus on interconnectivity supports:

Seamless movement between data centers, clouds, and edge environments (Metanet Hosting)

This is critical for:

- AI SaaS platforms

- Robotics companies

- Real-time analytics startups

4. Helping Companies Escape Cloud Cost Traps

Cloud is great for:

- Prototyping

- Early experimentation

But at scale:

- GPU costs become unpredictable

- Data transfer costs explode

- Long-term margins suffer

Colocation via providers like Metanet enables:

Predictable, controllable cost structures

Which is essential for:

- Companies training models continuously

- AI inference platforms with steady demand
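
The trade-off above can be sketched as a simple monthly cost comparison. Every price in this example (GPU hourly rate, egress cost, hardware price, colocation fee, amortization period) is a hypothetical placeholder, not a quote from Metanet Hosting or any cloud provider. The model also deliberately ignores staffing, financing, hardware refresh, and committed-use discounts.

```python
# Simplified cloud vs. colocation monthly cost model.
# All prices are hypothetical inputs for illustration only.

HOURS_PER_MONTH = 720  # 30 days x 24 hours


def cloud_monthly_cost(gpu_hours: float, rate_per_gpu_hour: float,
                       egress_tb: float, egress_cost_per_tb: float) -> float:
    """Usage-based pricing: pay per GPU-hour plus data transfer out."""
    return gpu_hours * rate_per_gpu_hour + egress_tb * egress_cost_per_tb


def colo_monthly_cost(hardware_cost: float, amortization_months: int,
                      monthly_colo_fee: float) -> float:
    """Owned hardware spread over its assumed lifetime, plus the facility fee."""
    return hardware_cost / amortization_months + monthly_colo_fee


# Assumed workload: 8 GPUs running continuously, 20 TB of egress per month.
gpus, rate, egress_tb, egress_per_tb = 8, 3.00, 20, 80.0
cloud = cloud_monthly_cost(gpus * HOURS_PER_MONTH, rate, egress_tb, egress_per_tb)

# Assumed colocation setup: $288,000 of hardware over 36 months, $4,000/month fee.
colo = colo_monthly_cost(288_000, 36, 4_000)

# Utilization at which cloud spend (including the same egress) equals colocation.
breakeven_utilization = (colo - egress_tb * egress_per_tb) / (gpus * HOURS_PER_MONTH * rate)

print(f"Cloud: ${cloud:,.0f}/month")
print(f"Colocation: ${colo:,.0f}/month")
print(f"Break-even GPU utilization: {breakeven_utilization:.0%}")
```

The useful output is the break-even utilization rather than either total. Below it, paying per hour in the cloud is cheaper under these assumptions; above it, steady workloads such as continuous training or always-on inference favor owned hardware in a colocation facility.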

5. Serving as a Long-Term Infrastructure Partner

AI companies don’t just need servers.

They need:

- Growth planning

- Capacity forecasting

- Network design

- Geographic scaling

Metanet can function as:

A long-term infrastructure partner, not just a hosting provider

This is especially valuable for:

- First-time founders

- Teams without deep infrastructure expertise

- Companies transitioning from cloud to owned compute

Part 7: The Big Takeaway — What This All Means

Let’s simplify everything:

- AI Data Center = Where intelligence is created

- AI Colocation = How most companies access that power

- AI Edge = Where intelligence is applied

And the real insight:

AI infrastructure is no longer a backend decision—it’s a competitive advantage.

Companies that understand:

- When to centralize

- When to distribute

- When to own vs rent

…will outperform those that don’t.

Final Thought

We are entering a world where:

- Compute is strategy

- Latency is product experience

- Infrastructure is differentiation

The winners in AI won’t just have better models.

They’ll have better infrastructure decisions.

And increasingly, that means working with partners—like Metanet Hosting—who don’t just provide space and power, but clarity in a space full of noise.

If you’re building an AI company today, the real question isn’t:

“Should we use cloud, colocation, or edge?”

It’s:

“How do we combine them intelligently to win?”